GAIA at the Sustainable AI Conference 2025 in Bonn

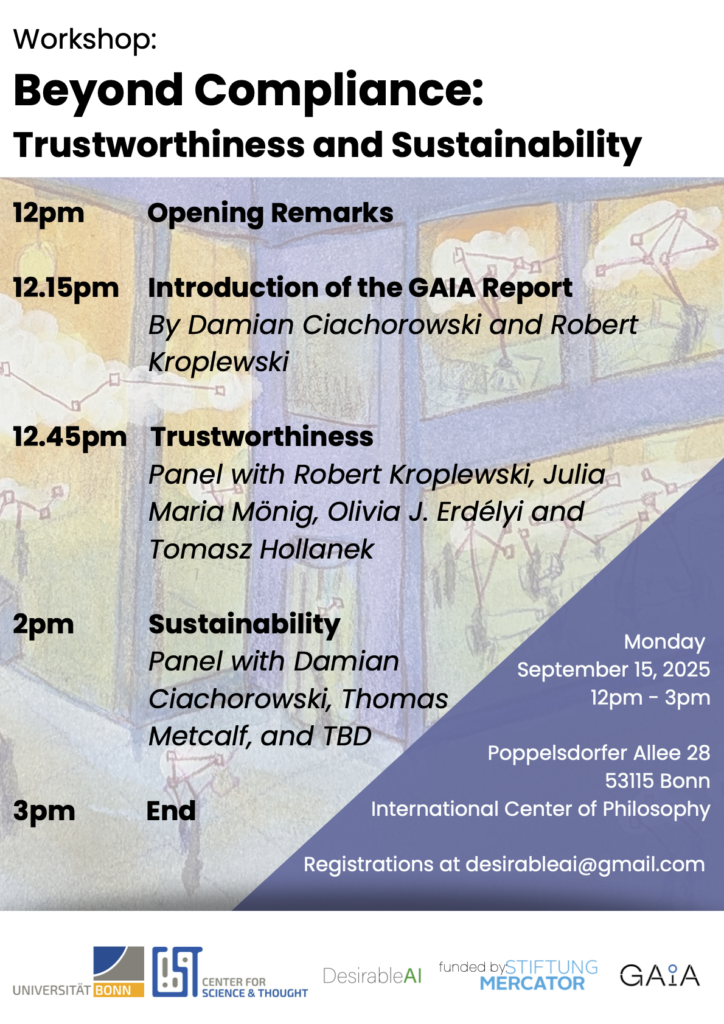

On 15 September 2025, in the historic setting of the International Center of Philosophy in Bonn, experts from across Europe gathered for a timely and forward-looking workshop: “Beyond Compliance: Trustworthiness and Sustainability.” The event formed part of the official program accompanying the Sustainable AI Conference 2025 – Shaping Sustainable AI and its Futures, hosted by the Bonn Sustainable AI Lab and the Institute for Science and Ethics.

At a moment when artificial intelligence is rapidly reshaping economies, governance, and everyday life, the workshop posed a pressing question: Is regulatory compliance enough? Or must we move beyond minimum legal standards to ensure that AI systems are truly trustworthy and sustainable?

From Regulation to Responsibility

The event opened with remarks setting the tone for a discussion that would bridge ethics, law, technology, and public policy. At the heart of the program was the presentation of the GAIA Report, introduced by Damian Ciachorowski and Robert Kroplewski.

The report challenges a narrow understanding of compliance—particularly in the context of emerging AI regulations—and argues for a broader framework rooted in systemic responsibility. Rather than treating legal alignment as the end goal, GAIA calls for:

- Transparent and auditable AI system design,

- Clear institutional accountability structures,

- Early-stage integration of environmental and societal impact assessments,

- Alignment of technological development with public values, not just market incentives.

In doing so, the GAIA Report reframes compliance as a baseline, not a benchmark of excellence.

Trustworthiness: Beyond Technical Transparency

The first panel discussion focused on trustworthiness, bringing together Robert Kroplewski, Julia Maria Mönig, Olivia J. Erdélyi, and Tomasz Hollanek.

The panelists explored what it actually means for AI to be “trustworthy.” Is transparency sufficient if it exists only in technical documentation? Can accountability be meaningful without clearly defined institutional responsibility? And how do we ensure that ethical integrity is embedded in systems from the outset rather than retrofitted after deployment?

A recurring theme was the need for interdisciplinary collaboration. Legal scholars, engineers, philosophers, and policymakers must work together if trust is to be earned rather than assumed. The discussion underscored that trustworthiness is not a feature that can simply be programmed—it is built through governance structures, public dialogue, and sustained institutional commitment.

Sustainability: Environmental and Societal Dimensions

The second panel shifted the focus to sustainability, with contributions from Damian Ciachorowski, Thomas Metcalf, and invited experts. As AI systems grow in scale and complexity, so too do their environmental and societal footprints. The discussion addressed:

- The energy demands and material resources required for training and maintaining large-scale models,

- The social consequences of automation and algorithmic decision-making,

- The structural question of whether AI supports regenerative transformation—or reinforces extractive economic models.

Participants emphasized that “Sustainable AI” must encompass environmental, social, and economic dimensions simultaneously. It cannot be reduced to carbon efficiency metrics alone. Instead, sustainability requires a rethinking of purpose: What is AI ultimately for? Whose interests does it serve? And at what cost?

A Broader Conference Context

The workshop took place within the wider framework of the Sustainable AI Conference 2025 (16–18 September 2025), organized by the Bonn Sustainable AI Lab and the Institute for Science and Ethics. The conference itself addressed foundational questions:

How can technologies support the good life in a world marked by climate crisis, social upheaval, and global instability? Is "Sustainable AI" a practical pathway or a utopian aspiration?

The workshop speakers, including Olivia J. Erdélyi, Damian Ciachorowski (GAIA), Robert Kroplewski (GAIA), Tomasz Hollanek, and Julia Maria Mönig, contributed to this broader debate.

Moving Beyond the Minimum

“Beyond Compliance” made one point unmistakably clear: legal compliance is necessary—but it is not sufficient.

Trust in AI systems depends on transparent governance, credible accountability mechanisms, and meaningful public engagement. Sustainability demands that environmental and societal impacts are addressed from the earliest stages of technological development. And responsible AI requires institutions willing to look beyond short-term performance metrics toward long-term collective well-being.

By participating in the Sustainable AI Conference 2025, GAIA reinforced its commitment to advancing AI that is not only innovative but also ethically grounded, environmentally conscious, and socially aligned. In Bonn, the conversation moved decisively forward: from rules to responsibility, from compliance to commitment, and from technological capability to human-centered purpose.